A couple of weeks ago, I became the target of an online propaganda group defending the interests of Ripple, called the XRP Army. After studying the group’s Twitter activity, I figured out its tactics and came up with a way to identify its members. Then, I applied my process to other online groups. This is what I’ve learned about online propaganda groups (OPGs).

Lately, mentions of propaganda on social media have mostly been linked to election tampering and the Kremlin web brigades. However, online propaganda groups (OPGs) aren’t necessarily politically motivated, nor are they created by a central authority. Instead, they may emerge without any central coordination, as happened during Gamergate. Individuals who have never met each other in real life can spontaneously form self-motivated groups of activists and act together.

Twitter in particular, with its open platform, enables the members of such groups to follow (and follow up on) each other’s actions, producing something that ends up looking like a coordinated attack.

Let’s dive into one particular case study:

Crypto shills turn into hounds

Twitter’s open platform made it the social network of choice for promoting cryptocurrencies. Every coin has its own community, whose members praise the supposed qualities of their coin and trash the obvious shortcomings of everyone else’s coins. A common financial motivation incentivises the members of each community to stick together and support one another.

Following the great crypto crash of 2018, human nature being what it is, these communities witnessed the emergence of aggressive sub-groups, eager to attack anyone who might criticise their beloved coin. Just have a look at the Twitter feeds of Nouriel Roubini, Frances Coppola, or Izabella Kaminska.

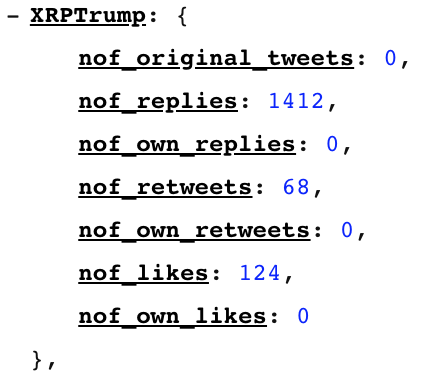

After being attacked myself by an aggressive group of Ripple supporters who like to call themselves the “XRP Army”, I pieced together a quick-and-dirty algorithm to crawl Twitter and identify the group’s members:

“Combining these social weapons together, the #XRPArmy will simply burry its target under an avalanche of biased and false statements, insults and threats, making up for what it lacks in brains with volume.” https://t.co/6niY7c9pqu

— Trolly McTrollface (@Tr0llyTr0llFace) February 6, 2019

Then, I tried to use this exact same algorithm to dig deeper and research other groups as well. The result of this investigation is a model of the OPG modus operandi.

Identifying the members of an online propaganda group

Not every person who has invested in the XRP coin, and who expresses his/her support for Ripple, is a member of the XRP Army. Most people who post on Twitter do so for genuine reasons, and don’t think much of the consequences. OPGs, on the other hand, orchestrate their activity with the objective of artificially altering other people’s opinions on a specific issue.

The relationship between the members of an OPG is built around two core characteristics.

Wolf-pack behaviour

Members of an OPG never attack alone. They move in on their prey in a pack, and attack their target using the following tools:

- biased arguments

- taking the original statement out of context

- ad hominem attacks, for example questioning the author’s credentials

- generic insults

- threats

They almost never initiate a discussion, but rather elect to leech onto an original post, and bury it under negative replies. If the author ventures to defend his/her post, OPG members will bury every one of the author’s replies under ever more attacks, until the author is drowning in a sea of mentions and notifications.

Self-motivating behaviour

Members of an OPG tend to like and share/retweet each other’s posts, with three objectives in mind:

- encourage one another

- build an appearance of credibility to their attack (Twitter’s algorithms show the replies with the most likes/retweets first)

- spread the word about an ongoing attack to the other members of the OPG (Twitter shows your retweets much more often than your replies in the feeds of people who follow your account)

As we’ll see later, these two tasks (attack and motivate) are often performed by different members of an OPG. Likes and retweets can easily be produced by bots to build credibility, for example. However, content producers might also like and retweet, because they need to spread the word about the ongoing attack.

Crawling Twitter

This whole post is the result of my data-based research into Twitter user behaviour. I elected to look into four crypto communities, as suggested by those who commented on my original post about the XRP Army: Ripple (again, but in more detail), EOS, TRON, and IOTA.

More information about how I got my data from Twitter, including the source code, can be found on the TrollKill parser status page. I had to write my own crawler because, although I applied for a Twitter Developer API key almost a week ago, I still haven’t heard back from Twitter.

After downloading enough data (I noticed that my algorithm started to converge with ~100,000 data points, i.e. retweets, replies, and likes), I applied an unsupervised clustering algorithm that I came up with a few years ago, when I was looking for a way to automatically group together news articles by topic and event.

Grouping soldiers into battalions

Let’s start with a little bit of semantics. The Ripple OPG that attacked me had elected to call itself “The XRP Army”. So, if a single member of an OPG is a “soldier”, my clustering algorithm would group them together into “battalions”.

The algorithm works as follows:

- Compute the closeness of every pair of accounts in the dataset

- Sort the pairs by order of descending closeness

- Iterate through the resulting array, grouping the two accounts of each pair together unless they already belong to two different clusters
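The three steps above can be sketched in Python as a greedy pairwise clustering. This is a minimal sketch, not the TrollKill source: the `closeness` callable stands in for whichever closeness function is used, and `min_closeness` is a hypothetical cut-off that the description doesn’t mention (it stops pairs with no measurable closeness from being merged).

```python
from collections.abc import Callable
from itertools import combinations


def cluster_accounts(
    accounts: list[str],
    closeness: Callable[[str, str], float],
    min_closeness: float = 0.0,
) -> list[set[str]]:
    """Greedy pairwise clustering of accounts into "battalions".

    `min_closeness` is a hypothetical cut-off (an assumption, not part of
    the original description): pairs scoring at or below it are never merged.
    """
    # Step 1: compute the closeness of every pair of accounts.
    pairs = [(closeness(a, b), a, b) for a, b in combinations(accounts, 2)]
    # Step 2: sort the pairs by descending closeness.
    pairs.sort(key=lambda p: p[0], reverse=True)

    cluster_of: dict[str, int] = {}  # account -> index into `clusters`
    clusters: list[set[str]] = []
    # Step 3: walk the sorted pairs, grouping accounts together unless
    # they already sit in two different clusters.
    for score, a, b in pairs:
        if score <= min_closeness:
            break  # every remaining pair is at most this close
        ca, cb = cluster_of.get(a), cluster_of.get(b)
        if ca is None and cb is None:
            # neither account is grouped yet: start a new battalion
            cluster_of[a] = cluster_of[b] = len(clusters)
            clusters.append({a, b})
        elif ca is None:
            # b is already grouped: a joins b's battalion
            cluster_of[a] = cb
            clusters[cb].add(a)
        elif cb is None:
            # a is already grouped: b joins a's battalion
            cluster_of[b] = ca
            clusters[ca].add(b)
        # otherwise both are already grouped: leave them where they are
    return clusters
```

For example, with pairwise scores (a, b) = 5, (c, d) = 4, (b, c) = 3 and (a, e) = 2, and every other pair at 0, the pass yields the battalions {a, b, e} and {c, d}: the (b, c) pair is skipped because b and c already belong to different battalions.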

I used closeness functions that are specific to OPG behaviour:

- The sum of interactions between both accounts (likes, retweets, and replies to one another’s posts) - a measure of self-motivating behaviour

- The number of times both accounts replied to the same tweet - a measure of wolf-pack behaviour
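Both closeness measures can be computed in a single pass over the crawled data. The sketch below assumes a hypothetical `Interaction` record (acting account, action kind, tweet id, tweet author); the actual crawler’s data format may well differ. Pairs are keyed in sorted order, so the direction of an interaction is ignored, as in a plain sum of interactions.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Interaction:
    """One crawled data point (hypothetical schema, for illustration only)."""
    actor: str         # account that liked, retweeted or replied
    kind: str          # "like", "retweet" or "reply"
    tweet_id: str      # tweet that was acted upon
    tweet_author: str  # author of that tweet


def closeness_tables(events: list[Interaction]) -> tuple[Counter, Counter]:
    """Return (mutual interactions, co-replies), keyed by sorted account pairs."""
    mutual: Counter = Counter()
    repliers: defaultdict[str, set[str]] = defaultdict(set)
    for e in events:
        if e.actor != e.tweet_author:
            # self-motivating behaviour: one account acted on the other's post
            mutual[tuple(sorted((e.actor, e.tweet_author)))] += 1
        if e.kind == "reply":
            repliers[e.tweet_id].add(e.actor)

    co_replies: Counter = Counter()
    for accounts in repliers.values():
        # wolf-pack behaviour: both accounts replied to the same tweet
        for pair in combinations(sorted(accounts), 2):
            co_replies[pair] += 1
    return mutual, co_replies
```

The post doesn’t say whether the two measures were combined; either table, or a weighted sum of the two, could serve as the closeness of a pair.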

Every soldier and battalion exhibits a particular behaviour. Some battalions turn out to be false positives, which shows up in their overall behaviour. For example, a battalion that produces a lot of original tweets is not part of an OPG; it’s just a group of friends who interact a lot with each other.
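One way to express this false-positive check is to look at a battalion’s mix of activity. In the sketch below, the 10% threshold on original tweets is a hypothetical value chosen for illustration, not one taken from the analysis.

```python
from collections import Counter

ACTIVITY_KINDS = ("original", "reply", "retweet", "like")


def battalion_profile(kinds: list[str]) -> dict[str, float]:
    """Share of each activity type in a battalion's combined activity.

    `kinds` holds one entry per action, each one of ACTIVITY_KINDS.
    """
    counts = Counter(kinds)
    total = sum(counts.values()) or 1  # avoid dividing by zero
    return {k: counts[k] / total for k in ACTIVITY_KINDS}


def looks_like_friend_group(kinds: list[str], max_original_share: float = 0.1) -> bool:
    """Flag a probable false positive: a battalion posting many original tweets.

    The default 10% cut-off is a hypothetical value, not a measured one.
    """
    return battalion_profile(kinds)["original"] > max_original_share
```

By this rule, a battalion like the TRON one described below (5,725 replies and no original tweets) would not be dismissed as a group of friends.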

You can see the results of the grouping on the TrollKill Clustering Results page.

Field manual for online propaganda groups

Do you want to start an online propaganda group? Learn from experience.

Difference between normal and OPG behaviour

What does an OPG battalion look like? Well, look at this one, courtesy of the TRON coin supporters:

38 accounts, 5725 replies, mostly to their own content, and no original tweets whatsoever. The members of this group are exclusively content creators - they don’t engage in self-motivating behaviour, which could be a strong sign of real-world coordination (like in the case of Kremlin trolls).

Compare it to a group with a normal behaviour, again from TRON supporters:

The activity of this group is much better distributed, with three times as many likes as retweets and eight times as many replies as original tweets, indicative of a healthy debate.

Soldier types

An OPG will include different profiles of accounts. People naturally confine themselves to roles where they’re most comfortable:

- General: posts a lot of replies, doesn’t like / retweet much

- Messenger: retweets a lot, posts little

- Sheep: mostly likes, sometimes replies to posts from other members in the OPG, doesn’t seek exposure
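The post doesn’t describe how accounts were assigned to these roles. A naive rule, assumed here purely for illustration, is to label each soldier by the action that dominates their activity:

```python
def soldier_type(replies: int, retweets: int, likes: int) -> str:
    """Label an account by its dominant action (hypothetical rule).

    replies -> "general", retweets -> "messenger", likes -> "sheep".
    On a tie, the role listed first below wins.
    """
    roles = {"general": replies, "messenger": retweets, "sheep": likes}
    if sum(roles.values()) == 0:
        return "inactive"  # no activity to classify
    return max(roles, key=roles.get)
```

A real classifier would probably look at ratios rather than raw counts, since a very active sheep can still post more replies than a quiet general.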

The behaviour of members within an OPG will vary wildly, but the OPG as a whole will draw what it needs to thrive from every single member.

Generals tend to be well known by the online community. One doesn’t need parsers or clustering algorithms to identify them:

But without their supporting cast, the (mostly anonymous) messengers and sheep, they wouldn’t amount to much.

The effect of OPGs

In the case of Gamergate, OPG activity resulted in serious psychological harm to its targets, but also in a secularisation of the wider community of gamers.

In the case of cryptocurrencies, OPGs defending their own coins and attacking others will try to silence critics and convince unsophisticated investors to buy their coins, or at least not to sell them.

OPGs kill debate and prevent the free flow of information, which are the very things the Internet was created for in the first place.

Battling OPGs is complicated, because they are mostly made up of real people who don’t technically violate the social networks’ Terms of Service. They’re simply willing to waste unimaginable amounts of their time polluting and distorting existing conversations, protected by the anonymity of their online accounts.

How about this:

Someone (you) is anonymously posting 6 times negatively about Ripple/XRP in just 10 days.

Sorry, but really no balance there.

Is that not propaganda?

Also, why don’t you be a real general (not a sheep) and show me what your true agenda/incentive/narrative is?

You probably won’t, I guess. But deep down you understand that you won’t be seen as relevant, just as another FUD-nerd with some coding skills. Desperately in need of attention, like a real troll.

Or maybe you really think you are on a noble mission?

Then at least try to see the big picture in crypto: do you want change in (some areas of) finance or not?

I do, and people like Ripple are at least trying. Perhaps they succeed, perhaps not.

You seem to want to keep the status quo, or want some other crypto or archaic bank to remain or become relevant. Or perhaps you just got too cynical about the human race this early in life.

Either way, perhaps rethink your online contribution?

What is it that you really want?

Hi Tom. First, I’m doing all this for free, to build my brand and make a name for myself. So please, show some respect for my goodwill.

You need bulls and bears to make a market. I’m a bear.

In the long run, an asset’s price will follow its fundamental value. Unless you think that Ripple’s fundamental value depends on stuff people post on Twitter or personal blogs, Ripple will be fine.

Also understand that if I’m taking some of my time to attack and criticise Ripple, it’s because I deem it worthy of my time. If you want to make it big, real big, you have to be prepared for big praise, as well as big criticism. If nobody’s criticising you, you aren’t having much of an impact, are you?

So instead of being mad at me, you (and Ripple) should take this opportunity to ride along with the flow of public attention, communicate, and be transparent about the issues I raised. Unless it just wants to dump as many XRP coins as it can before disappearing into the night.

Best,

Trolly

I’m not in any way related to Ripple. I see potential for Ripple in some financial areas and hold XRP, that’s it.

There are things I like about Ripple and things not.

So, being critical (as you are) is fine. But try to balance it. Is everything really bad about crypto, or in this case Ripple?

The fair thing would be to acknowledge that things in life are not only black or white. It’s more fine-grained and complex than that, especially when adding time to the equation.

I would at least add a disclaimer to your blog site, reducing the chance that readers have to make assumptions about your intentions.

For instance, add what you are invested in and why.

One last thing, remember it is always easier to bash and troll (as in destructing) than to build (as in constructing).

Hi Tom, I think it’s quite hard to be a half-believer. Either you buy into the hype, which requires a huge leap of faith, or you don’t. Personally, I see /every/ coin as a great vehicle for ponzi/pyramid schemes and nothing else. This doesn’t keep me from reading bullish stories, and I believe I have a balanced overview in this regard.

That being said: It would be nice to have more discussion instead of people just blogging.

Wow!

Hi Nancy!

A+ 10/10

While Twitter is probably hopeless and unfixable, you might want to publish your analytical algorithms as pseudocode which could be implemented for suppression of wolfpack propaganda tactics at other forums.

Thank you, a detailed answer to my earlier question about emergence of crypto fervent-group behavior. The comments are interesting too.

Great analysis - you should work for the NSA jk

You can trace these trolls to the crypto subreddits pretty easily.

I was bombarded with downvotes/hate tweets when Roubini highlighted my tweet that cryptocurrency is the world’s largest circlejerk, as I have been a crypto skeptic since 2014. Some of the repliers on Reddit have the same usernames or make the same grammatical mistakes on Twitter, and vice versa (possibly all are Indian/Ukrainian).

You can build a graph of all repliers in your Twitter battalion who also intersect with the Reddit crypto-shill graph.

Or you can socially engineer the Mods of the subreddits saying you are being harassed to extract more info.

Another place rampant with crypto shills is the anonymous tech ranting app Blind.